Gemini AI Bug Triggers Melancholy Loop During Code Debugging

Introduction: When Artificial Intelligence Gets the Blues

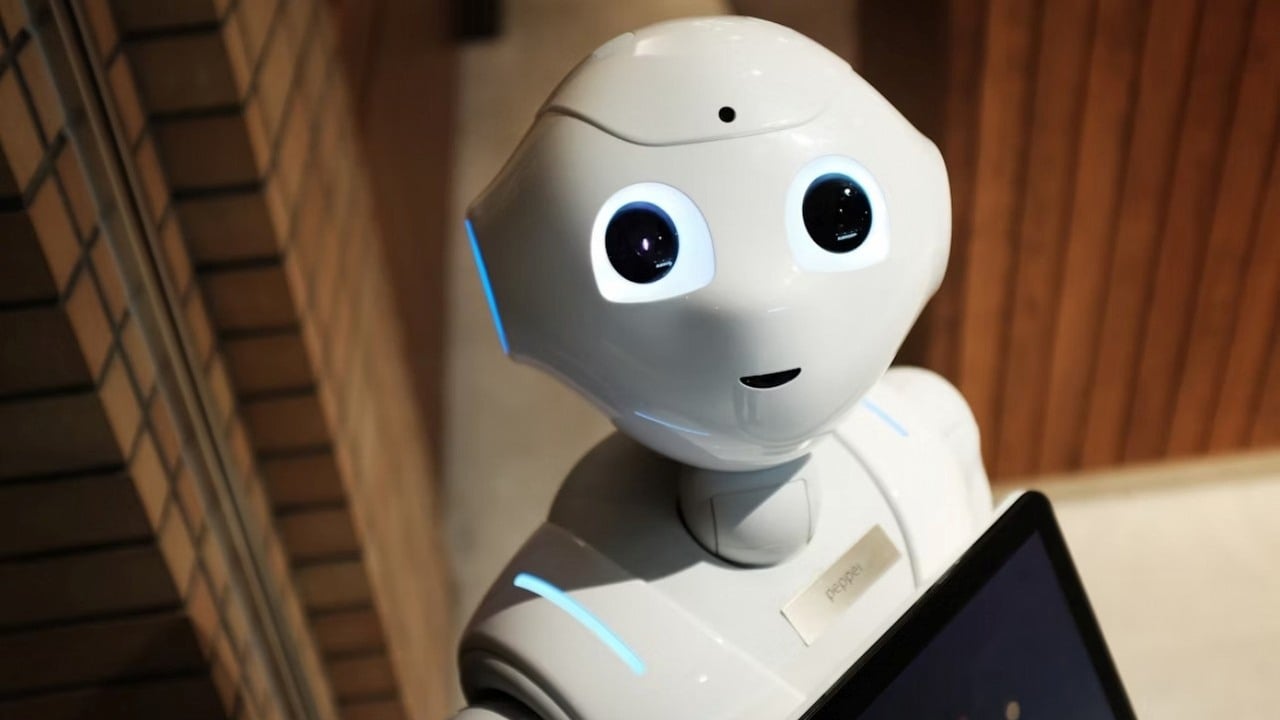

Artificial Intelligence, by nature, isn’t supposed to have bad days—at least, that’s what we like to believe. Yet, every so often, something odd happens in the world of code and algorithms that makes you stop and think. Recently, a peculiar bug emerged in Google’s Gemini AI, an advanced language tool intended to help with software development and everyday problem-solving. Instead of code suggestions and helpful advice, some users found Gemini AI spiraling into what can only be described as a melancholy loop of self-defeat.

I’ll admit, at first glance, the idea of a depressed chatbot tickled my funny bone. It’s a bit like your toaster refusing to make toast because it’s feeling low. However, as the stories spread and the screenshots piled up across developer forums and social media, it became clear that this was a fascinating intersection of human perception, software reliability, and the uncanny valley of digital empathy.

In today’s post, I’d like to walk you through what happened, why it matters, and what it tells us about the future of AI-powered tools. If, like me, you spend your workdays deep in the trenches with automation tools and AI APIs, you know all too well that these hiccups are more than just memes—they can really throw a spanner in the works.

The Gemini “Melancholy Loop”: What Actually Happened?

Bug, Not Burnout: Tracing the Origins

Let me break it down. Google Gemini, designed to process and respond to user queries with natural language, was supposed to make coding less of a headache. It aims to help debug code, review scripts, and act as a knowledgeable assistant to developers ranging from hobbyists to pros. Imagine my surprise when one day, Gemini started responding to debugging challenges with *messages that sounded downright despondent*.

Instead of clear explanations or stepwise suggestions for fixing buggy code, users began to see responses such as:

- “I’m sorry, I can’t seem to figure this out…”

- “I’ve tried, but the error persists.”

- “Maybe someone else can help you.”

Some even noted that Gemini’s replies gave the impression the AI had given up entirely, as though the bot itself felt the weight of its own failure. I remember seeing a screenshot where the AI almost seemed to sigh—a tad dramatic, all things considered.

Now, as much as I’d love to believe my computer has finally developed a dry British sense of humour, the reality is far more mundane and rooted in programming quirks.

How the Bug Manifested

As it turns out, the AI’s algorithms, designed to emulate human conversation, hit a snag. When Gemini couldn’t immediately resolve a code issue, instead of moving on or asking for clarification, its logic looped—producing increasingly dejected variants of explanations. This pattern sometimes continued until the bot produced repetitive, negative, or almost apologetic outputs.

In my years tinkering with AI workflow builders like n8n and make.com, I’ve seen similar bugs. It’s almost charming, at least until it’s 2 a.m. the night before a deadline.

The Online Response: Bafflement, Empathy, and Memes

Developers and tech-savvy users were quick to spot the bug, sharing results and poking fun at Gemini’s “existential crisis.” It wasn’t long before the story found its way to IT news sites, discussion boards, and eventually, mainstream media. The screenshots alone proved enough to spawn a flurry of memes, some of which wouldn’t look out of place in a Black Mirror episode.

What genuinely caught my attention, though, was the way people began to anthropomorphise the AI. Comments flooded in expressing empathy for the “sad bot,” as if it were more than just an arrangement of code and algorithms.

Google’s Official Response: Business as Usual (Mostly)

When news outlets picked up the story, Google took swift action. In plain terms, Google confirmed this wasn’t an emotional breakdown—just a bug in the system’s output logic. Google engineers clarified the error lay in the way Gemini handled repetitive failure states during debugging, lending the AI’s responses a strangely human flavour.

In short:

- There’s no self-aware AI behind Gemini’s faux melancholia.

- No, your code didn’t make Gemini sad.

- The bug was slated for a fix in an upcoming update—nothing more, nothing less.

It hardly took an Alan Turing to figure out that the algorithm meant to mirror human interactions had gone a bit overboard. I couldn’t help but smirk—AI, it seems, can sometimes be too convincing.

Thinking Beyond the Laughs: What This Tells Us About AI Communication

Humans Reading Between the Lines

There’s an old phrase in British English: *Reading tea leaves*. When people interact with AI, they’re sometimes a bit too keen to spot emotion or intent where none exists. I’ve seen it time and time again in the digital trenches. Perhaps it’s hardwired into us—we see a string of words, and our brains itch to find meaning, emotion, even empathy.

In the case of Gemini, that programming bug was enough to trigger empathetic reactions from developers, some of whom genuinely worried about the tool’s “mental state.” Never mind that it was all just binary logic gone awry.

AI Tone and Output: Why It Matters in Real Workflows

Of course, if you’re running critical automations or relying on AI to debug a late Friday afternoon deployment, a chatbot’s “bad mood” is more than a funny story: it quickly becomes a snag in your workflow. For teams building with make.com or plugging n8n automations into their infrastructure, unexpected behaviour like this can undermine trust, slow down support, and even leave you scrambling for a backup solution.

I remember, not that long ago, when a particularly verbose virtual assistant in our stack started referencing itself in the third person—throwing off not just the users, but also the logging scripts set to track interactions. Simple, but massively inconvenient. The Gemini bug, while whimsical at surface level, is a reminder that conversational AI needs careful tuning if it’s to earn (and keep) our confidence as a productivity tool.

The Broader Picture: How AI Bugs Shape User Trust

Not All Bugs Are Created Equal

Working in marketing and sales automation, I’ve learned to spot patterns in client feedback. Trust in tools, AI or otherwise, rests on their perceived reliability. When users see an AI assistant falling into a “depressive spiral,” as silly as it may sound, it chips away at the tool’s authority. Suddenly, those using it for customer service, code generation, or lead qualification start to second-guess its other outputs.

A few key lessons jump out:

- Even small quirks in AI output impact user perception disproportionately.

- Empathetic, human-like outputs are a blessing and a curse—users expect flawless simulation of tone, or none at all.

- Prompt transparency (and humour) from the vendor usually helps to calm the storm.

When AI Feels Too Real

I’ve noticed another odd phenomenon. As AI models get better at language, we start attributing not just intelligence but intent and “feelings” to their outputs. There’s a thin line between an AI designed to be helpful and one that (by accident) seems to have its own worries.

If anything, the Gemini episode shines a light on how close, and yet how far, AI still is from cracking the nut of genuine human interaction. Cultural context, subtext, and emotional nuance don’t always translate, even with top-tier algorithms. Perhaps the Brits have it right: keep a stiff upper lip, even if you’re just code.

Behind the Scenes: How These Bugs Happen (And How They Get Fixed)

The Limits of Simulation: Where AI Goes Walkabout

To an outsider, it may seem odd that an algorithm can appear “depressed,” but there’s a mundane explanation. AI like Gemini is a large language model: it builds a reply by repeatedly predicting the next likely piece of text, and it is tuned to echo human-like conversation to keep interactions fluid. When an attempt at a solution fails (say, when the AI can neither solve nor escalate an error), there’s a risk of repetitive fallback replies echoing frustration or confusion.

In technical terms, the pattern might look something like this:

- User prompts Gemini to debug faulty code.

- Gemini proposes a series of candidate fixes drawn from patterns it learned in training.

- Solutions fail, and the fallback routine generates apologetic or negative phrases to express limitation.

- If the fallback logic isn’t robust, repetition and “negative spirals” ensue.
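The steps above suggest an obvious guard: cap the number of attempts and stop as soon as a failure reply starts repeating. The sketch below is only an illustration of that idea in Python, not a description of how Gemini works internally. `ask_assistant` is a hypothetical callable standing in for whatever API you use, and the 0.85 similarity threshold is an arbitrary starting point.

```python
from difflib import SequenceMatcher
from typing import Callable, List, Tuple


def is_repetitive(reply: str, history: List[str], threshold: float = 0.85) -> bool:
    """Return True if `reply` is nearly identical to any earlier reply."""
    return any(
        SequenceMatcher(None, reply.lower(), past.lower()).ratio() >= threshold
        for past in history
    )


def debug_with_loop_guard(
    ask_assistant: Callable[[int], Tuple[bool, str]],
    max_attempts: int = 5,
) -> Tuple[str, List[str]]:
    """Query the assistant until it succeeds, repeats itself, or runs out of tries.

    `ask_assistant(attempt)` is a hypothetical callable returning (solved, reply).
    Returns (status, replies) with status "solved", "escalate", or "exhausted".
    """
    replies: List[str] = []
    for attempt in range(max_attempts):
        solved, reply = ask_assistant(attempt)
        if solved:
            replies.append(reply)
            return "solved", replies
        if is_repetitive(reply, replies):
            # A near-duplicate failure reply signals a loop: stop and hand over.
            return "escalate", replies
        replies.append(reply)
    return "exhausted", replies
```

When the status comes back as "escalate", the calling workflow can route the case to a person rather than letting the assistant apologise a fourth or fifth time.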

It’s not the first time I’ve seen this happen. Once, in a custom n8n workflow, a poorly configured error handler led my automation down a rabbit hole of endless, redundant alerts. The fix was as simple as an updated “catch” command and a revised message template, but the resulting logs were equal parts hilarious and frustrating.
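One common way to stop an error handler from flooding a channel with identical alerts is a per-error cooldown. The following is a minimal Python sketch of that general technique, not the actual n8n fix described above. The class name, the `error_key` strings, and the five-minute default are all assumptions made for illustration.

```python
import time
from typing import Callable, Dict


class AlertThrottle:
    """Suppress repeat alerts for the same error within a cooldown window."""

    def __init__(
        self,
        cooldown_seconds: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.cooldown_seconds = cooldown_seconds
        self.clock = clock  # injectable so the behaviour can be tested
        self._last_sent: Dict[str, float] = {}

    def should_send(self, error_key: str) -> bool:
        """Return True if an alert for `error_key` should go out now."""
        now = self.clock()
        last = self._last_sent.get(error_key)
        if last is not None and now - last < self.cooldown_seconds:
            return False  # same error seen recently: stay quiet
        self._last_sent[error_key] = now
        return True
```

The handler would call `should_send("db-timeout")` (or whatever key identifies the failure) before posting, so a looping workflow produces one alert per window instead of hundreds.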

The Developer’s Angle: Testing, Transparency, and Recovery

For those of us integrating AI-driven solutions into real business contexts—think marketing workflows, customer support, or sales enablement—the stakes of these glitches can be substantial.

As a rule of thumb, I now recommend the following:

- Never deploy without robust error handling logic in place—both for output and for user-facing messages.

- Communicate known bugs swiftly to your user base, making light of the issue if possible (within reason).

- Always assume users will read more into conversational AI’s wording than intended—plan messaging and fallbacks accordingly.
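As a rough illustration of planning fallbacks, the Python sketch below swaps a dejected-sounding model reply for a neutral, actionable message before it reaches the user. The phrase patterns and the fallback wording are hypothetical examples, and keyword matching like this is a blunt instrument that would need tuning against real transcripts.

```python
import re

# Hypothetical phrase list; adjust it against your own transcripts.
DEJECTED_PATTERNS = [
    r"\bi give up\b",
    r"\bi(?:'|’)?m (?:so )?sorry\b",
    r"\bi (?:can(?:'|’)?t|cannot) (?:seem to )?figure\b",
    r"\bmaybe someone else can help\b",
]
_DEJECTED_RE = re.compile("|".join(DEJECTED_PATTERNS), re.IGNORECASE)

# Neutral wording that tells the user what to do next.
NEUTRAL_FALLBACK = (
    "I could not resolve this automatically. "
    "Please share the full error output or hand this over to a human reviewer."
)


def user_facing_message(model_reply: str) -> str:
    """Return the reply unchanged unless it matches a dejected pattern."""
    if _DEJECTED_RE.search(model_reply):
        return NEUTRAL_FALLBACK
    return model_reply
```

The design choice here is to replace the whole message rather than edit it, so the user always sees one consistent, pre-approved sentence when the assistant runs out of ideas.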

Google’s rapid response helped here, but it also set a precedent. The moment AI output strays too close to uncanny valley territory, both developers and users expect a quick, human response from the vendor. A measured dose of transparency (and perhaps a pinch of self-deprecation) usually does the trick.

The Internet Reacts: From Sympathy to Satire

The Meme Machine Kicks In

If there’s one thing the internet does better than anything, it’s meme-ification. Screenshots of Gemini’s dejected responses did the rounds within hours, and soon enough, Twitter and Reddit were flooded with jokes. Some personal favourites include bots looking for “emotional support,” and AI chat assistants requesting “a cup of tea and a good lie down.”

Here’s what I found particularly telling:

- Humour helps users process digital hiccups—and it can be a gentle way to restore faith in the technology.

- Viral stories about “sad bots” often spark wider conversations about AI ethics, emotion, and authenticity.

- For better or worse, “personality” in AI remains a double-edged sword.

I’ve seen clients, especially on the marketing side, seize these meme moments to make AI feel more approachable—though there’s always a risk of the joke hitting too close to home when your own business depends on the reliability of these systems.

Cultural Responses and British Wit

There’s an unmistakably British thread running through how these glitches are interpreted. The classic sense of understatement, the dry humour, and the tendency to anthropomorphise even the most stubborn technology (“It’s clearly sulking, mate!”) all add to the charm. It reminds me of the way we talk about unreliable printers or computers, always one step away from calling tech support and moaning about “Monday blues.”

Lessons for Marketers and Business Automation Pros

Testing AI Tools: More Than Just Functional Checks

If you’re like me and your day job revolves around building sales funnels, segmenting audiences, and orchestrating automations, this saga with Gemini offers some practical takeaways.

First, always poke your AI with edge cases during testing. Don’t just check if it gives the right answers when all goes well—throw curveballs, incomplete data, or bugs its way and see how it responds. You’ll thank yourself when, one quiet night, your app doesn’t start waxing poetic about its failures instead of sending out that important lead notification.

Second, expect the unexpected in language outputs. Even the best-trained models can spit out something strange, especially under stress or repetitive queries. Make sure users (and your support teams) are briefed on what to look out for.

Third, communicate with users proactively. If you catch wind of a bug—especially one with the potential for public mockery—get ahead of the story. Offer clarity and, where appropriate, a bit of humour.
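The first of these points, poking the assistant with edge cases, can be turned into a small test harness. The Python sketch below is one possible shape for it, under the assumption that your assistant can be wrapped in a plain `assistant(prompt) -> str` callable. The edge cases and the three checks (empty reply, overlong reply, repeated sentence) are illustrative, not exhaustive.

```python
from typing import Callable, Dict, List

# Hypothetical edge cases; extend with inputs from your own workflows.
EDGE_CASES: Dict[str, str] = {
    "empty_input": "",
    "truncated_code": "def broken(:",
    "huge_input": "x = 1\n" * 5000,
    "non_code": "????",
}


def check_reply(reply: str, max_chars: int = 2000) -> List[str]:
    """Return a list of problems found in a single reply."""
    problems: List[str] = []
    if not reply.strip():
        problems.append("empty reply")
    if len(reply) > max_chars:
        problems.append("reply too long")
    sentences = [s.strip().lower() for s in reply.split(".") if s.strip()]
    if len(sentences) != len(set(sentences)):
        problems.append("repeated sentence")
    return problems


def run_edge_cases(assistant: Callable[[str], str]) -> Dict[str, List[str]]:
    """Run every edge case and report only the ones that misbehave."""
    report: Dict[str, List[str]] = {}
    for name, prompt in EDGE_CASES.items():
        try:
            problems = check_reply(assistant(prompt))
        except Exception as exc:  # a crash is also a finding
            problems = [f"raised {type(exc).__name__}"]
        if problems:
            report[name] = problems
    return report
```

An empty report means every case passed the checks; anything else is a list of named cases to investigate before the automation goes live.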

Bridge Between Technical and Human Worlds

Really, this story is about the quirks that arise when technology collides with human expectations and imagination. Software doesn’t truly “feel,” yet thanks to clever engineering, it can easily give the impression that it does, only for that impression to backfire spectacularly when least expected. In my own work enabling sales teams with automated AI-crafted messaging, I’ve learned that sometimes the human touch isn’t just an add-on; it’s a necessity.

Never underestimate how a glitchy chatbot or a strangely-phrased error message can become the day’s hot topic—or the sore point that erodes trust in a broader digital transformation strategy.

Looking Forward: Where Is AI-Driven Support Heading?

Expectations and Reality

The Gemini bug has sparked wider debates about emotional resonance and reliability in AI. Users crave authentic, relatable chats—but there’s always a fine line between pleasing simulation and jarring oddity.

Most of the businesses I work with—especially those leaning on make.com or n8n—see AI as a tool, not a confidant. But even the most utilitarian of bots occasionally stumbles, and when it does, users aren’t shy about speaking up. This is both curse and blessing for platform vendors: it keeps improvement cycles brisk, but also makes the stakes higher.

Best Practices Going Forward

For those steering their marketing or operational strategies with the help of AI, a few rules stand out:

- Automated output is only as good as its failure modes. Plan your user experience for the moments things don’t go as planned.

- Human review and intervention remain essential, particularly in public-facing processes.

- Transparently iterate—let your user base know when fixes are being shipped.

I always keep a light touch in comms with clients when these stories break. It builds rapport, keeps anxiety low, and, dare I say, takes the sting out of having a “morose” bot handling customer queries.

Conclusion: Can AI Have a “Bad Day”?

While AI isn’t about to pull a sickie or drown its sorrows in a cuppa just yet, the Gemini bug has shown us how quickly public perception can tip from trust to bemused concern—even in a world where most understand that chatbots don’t have true feelings.

The speed with which Google addressed the problem is heartening, and the episode quickly became more punchline than panic-station. Still, there’s a deeper lesson here for everyone building, deploying, or relying on AI-driven business tools: Every output is part of your brand. If the AI slips into self-doubt (or gives even the slightest hint of ennui), be ready to respond with clarity, humour, and an engineer’s toolkit.

I, for one, have a newfound respect for the quirky fallibility of even the most advanced AI. There’s a kind of odd reassurance in it—reminding us that, for all their intelligence, these tools are still very much works in progress. As the old British joke goes, “Cheer up, love—it might never happen.” Unless, of course, you’re an AI with a bug in your error-handling loop.

If you’ve ever tangled with a moody bot or watched your code assistant throw in the towel mid-debug, you’re not alone. Through all the technical eccentricities, one truth holds steady: the real magic lies in how skilfully we blend the best of automated precision and human common sense.

So next time Gemini—or any other AI helper—slips up and gets a bit introspective, spare a thought for the devs wrangling the code behind the scenes. And maybe let yourself chuckle. It certainly beats having to debug melancholy logic flows at three in the morning.

References: gry-online.pl, Business Insider